At IntraSee we are super excited to announce that version DA-20.1.1 is currently being rolled out to all our customers. As usual, many thanks to Oracle for all their support and collaboration as we utilize their excellent Oracle Digital Assistant (ODA) technology via our hybrid-cloud compatible, GDPR-compliant, and world-leading metadata-driven middleware solution.

Our goal of automating every aspect of Oracle ODA design, build, test, and deployment wouldn’t be possible without having such an awesome partner to work with. So, with that said, here are the highlights for IntraSee DA-20.1.1:

- Integrate Oracle-delivered skills into topics that include skills from other sources (e.g., IntraSee, client-created, or other vendors). NOTE: this includes the ability for a business user to mix and match skills in a topic via the wizard admin tool.

- Include embedded web forms within the Microsoft Teams channel as part of a transactional conversation.

- Other minor UX enhancements to Microsoft Teams channel support for ODA.

- Secondary “fallback” checking for utterances that fail to meet the confidence threshold of ODA.

- Ability to conversationally navigate backwards and forwards between multiple bot flows, maintaining memory of previous answers to questions.

- General enhancements to provide more human-like conversational flows.

- Improvements to automated predictive utterance testing, and automated daily utterance analysis reports.

- Improved performance of all response times.

- Improved dashboard metrics and reporting.

- Additions to the Skills Library (more delivered HCM & Campus skills).

- Add support for new Oracle ODA 20.x features.

Product Update Notes

A key addition for this release was the seamless integration of any ODA skill built by other organizations. Primarily this was to support the really cool set of skills built by the Oracle HCM Cloud team, but it also allows any skill built on the ODA stack to be included. This means clients can build their own bot flows without having to worry about utterance training, testing, and matching (including disambiguation). The IntraSee “super skill” handles this automatically, which takes away the #1 reason chatbot projects fail. With this new addition, any bot flow will be accurately matched regardless of who created it.

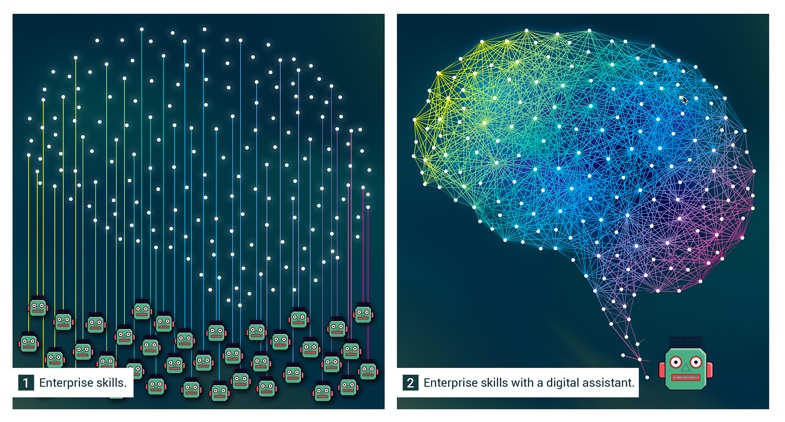

Why is this important? Because we want to enable a box #2 solution, not box #1 (see Figure 1). This is how the human brain operates. And this is how a “downloadable worker” should operate too.

Figure 1: Box #2 is how an advanced “downloadable worker” is configured. Box #1 is a Tower of Babel.

Microsoft Teams was another focus for this release. The biggest addition was the capability to embed advanced web forms within a conversation in MS Teams. This proved useful for things like changing an address, updating bank deposit info, and more complex manager transactions, such as an employee promotion with a salary change.

As always, we constantly make advances with utterance matching. This is job #1 in our world. If you can’t match an utterance to the correct question/answer, then it won’t matter how elegant the bot flow is. So, to that end, we raised confidence thresholds as a general best practice and added fallback checking to catch utterances that fell below the threshold but that we felt had clear matches to multiple entries in the digital assistant’s enterprise knowledge. We then ran the results through our disambiguation engine to ensure the user was able to correctly identify what it was they were asking.

This is how people talk to people. Clarification is a key aspect of any conversation. It’s not a binary activity, and a lot of grey areas exist in how people state what they want. So advanced matching and disambiguation processes are not a nice-to-have. They are a necessity.

The result was better matching of utterances. So, job #1 achieved!
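For readers who like to see the idea in code, the threshold-plus-fallback approach described above can be sketched roughly as follows. This is a minimal illustration, not the IntraSee or ODA implementation: the `Match` type, the `resolve` function, and the numeric values for `threshold` and `fallback_floor` are all hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Match:
    """A candidate intent and its confidence score (hypothetical type)."""
    intent: str
    confidence: float


def resolve(matches, threshold=0.7, fallback_floor=0.4, max_options=3):
    """Decide how to respond to an utterance given its scored candidates.

    Returns one of:
      ("answer", intent)            - top candidate met the threshold
      ("disambiguate", [intents])   - fallback found near misses to offer
      ("unresolved", None)          - nothing was close enough
    The threshold values are illustrative assumptions only.
    """
    ranked = sorted(matches, key=lambda m: m.confidence, reverse=True)

    # Primary check: the best candidate clears the confidence threshold.
    if ranked and ranked[0].confidence >= threshold:
        return ("answer", ranked[0].intent)

    # Fallback check: collect candidates that missed the threshold
    # but still scored above a lower floor.
    near_misses = [m.intent for m in ranked if m.confidence >= fallback_floor]
    if near_misses:
        # Hand the shortlist to a disambiguation step so the user can
        # confirm what they meant.
        return ("disambiguate", near_misses[:max_options])

    return ("unresolved", None)
```

In a sketch like this, raising `threshold` makes direct answers stricter, while the fallback floor keeps plausible near misses from being discarded outright and turns them into a clarifying question instead.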

Finally, we continue to make the conversational skills of the digital assistant more sophisticated, and improve on our automated testing processes and dashboard metrics. If you can’t measure something, then how do you know it’s a success? And how do you know you are getting better each week?

We feel like we can’t focus too much on the training, testing, and measuring of digital assistant performance. Your “downloadable worker” needs to be treated like a human worker in many respects. Only instead of bi-annual performance reviews, you need to be constantly evaluating performance every day, and providing weekly statistics to your organization on its growth and maturation.

This is why we make this a focus of every product release.

Contact us below to learn more and set up your own personal demo: